As featured in Azure Spring Clean 2026

The problem with small DevOps teams

Running a GitHub organisation with dozens of repositories sounds manageable… until your DevOps team is two people. Every new project starts the same way: someone sends a message, you manually create a repo, configure permissions, set up topics. Repeat.

The work is not hard. It is repetitive, error-prone, and scales poorly. Worse, it creates a human bottleneck: nothing moves until someone on the DevOps team has time.

We needed a system where:

- Anyone can request infrastructure through a self-service form

- The request produces production-ready code changes automatically

- A DevOps engineer only needs to review and approve

No manual YAML editing. No Teams messages asking “can you create a repo for me?”. Just a form, automation, and a pull request.

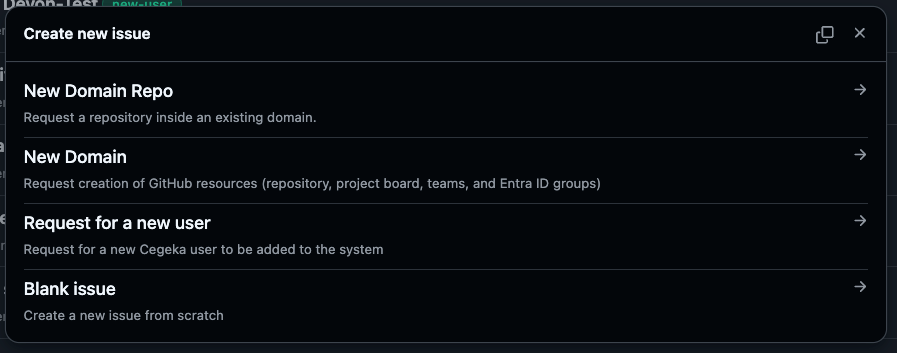

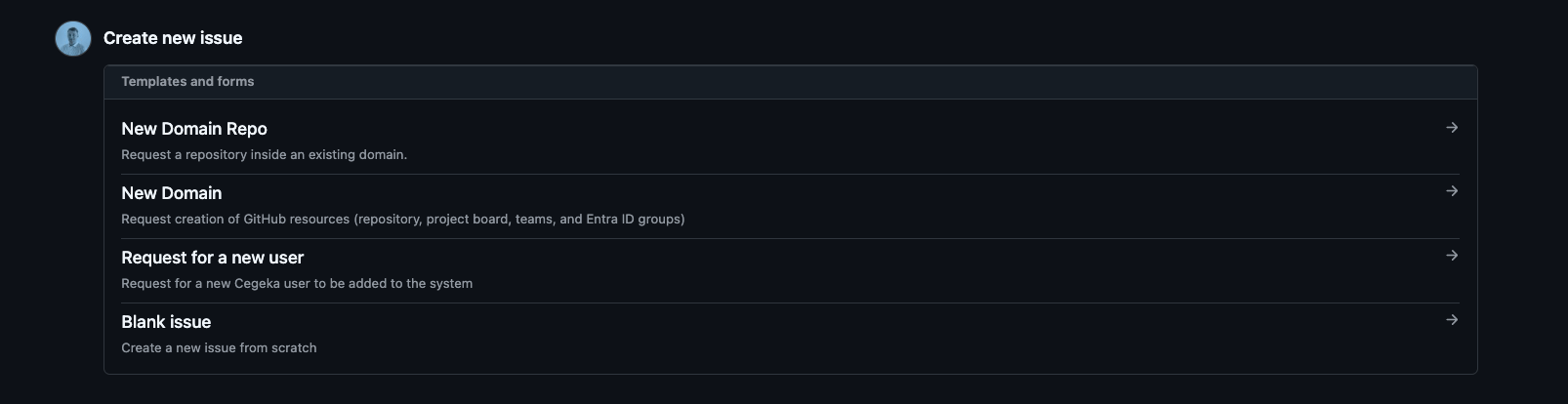

The solution: issue forms as a service catalog

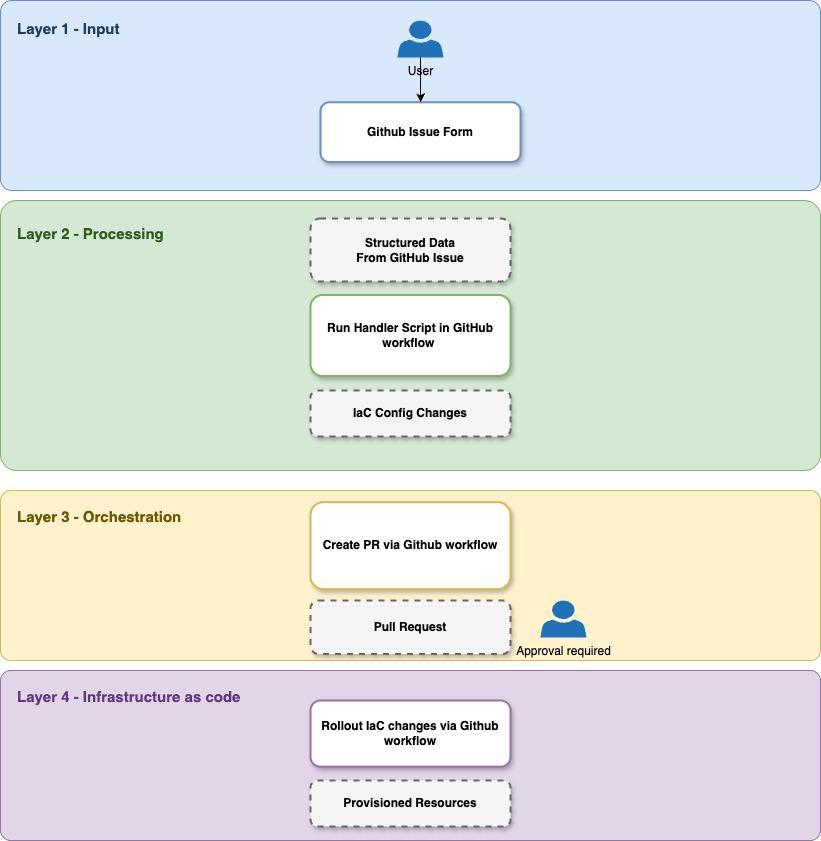

The architecture has four layers, each with a single responsibility:

| Layer | Tool | Responsibility |
| --- | --- | --- |
| Input | GitHub Issue Form | Structured data capture |
| Processing | Python script | Parse issue, update config files |
| Orchestration | GitHub Actions | Branch, commit, PR creation |
| Infrastructure | Terraform | Actual resource provisioning |

We will walk through each layer using a simple example: requesting a new GitHub repository through a form.

Layer 1: The issue form

GitHub issue templates support YAML-based forms that render as structured input fields. Each answer lands in the issue body under a predictable heading, so the automation never has to interpret freeform text.

```yaml
name: New Repository
description: Request creation of a new GitHub repository
title: "New Repo"
labels: ["new-repo"]
body:
  - type: markdown
    attributes:
      value: |
        ## What gets created?
        A new private GitHub repository with the specified name and topics.

  - type: input
    id: repo_name
    attributes:
      label: Name of the new repo
      description: |
        Use lowercase with hyphens, avoid spaces.
      placeholder: "my-new-service"
    validations:
      required: true

  - type: input
    id: topics
    attributes:
      label: Repository Topics
      description: "Comma-separated tags for categorization"
      placeholder: "python, api, data-processing"
    validations:
      required: false
```

The labels field is critical: it is how the automation identifies which handler to invoke. Each template type gets a unique label.

The markdown block at the top acts as inline documentation. Users see exactly what will be created before they submit.
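The form's description asks for lowercase names with hyphens, but that text is guidance rather than enforcement. Below is a minimal sketch of a check the handler could run before touching any config. The function name `is_valid_repo_name` and the exact pattern are assumptions for illustration, not part of the original script.

```python
import re

# Hypothetical rule mirroring the form's guidance: lowercase letters and
# digits, separated by single hyphens, no spaces.
REPO_NAME_PATTERN = re.compile(r'[a-z0-9]+(?:-[a-z0-9]+)*')


def is_valid_repo_name(name: str) -> bool:
    """Return True if name follows the lowercase-with-hyphens convention."""
    return REPO_NAME_PATTERN.fullmatch(name) is not None


print(is_valid_repo_name("my-new-service"))  # True
print(is_valid_repo_name("My New Service"))  # False
```

Rejecting a bad name in the handler means the requester gets feedback on the issue instead of a reviewer catching it in the pull request.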

Layer 2: The Python issue handler

A single Python script serves as the processing engine. It receives the raw issue JSON, detects the template type from labels, and runs the matching handler.

Template detection

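```python
from typing import Optional

# Maps an issue label to its handler and branch prefix.
# The handler functions must be defined before this dictionary.
TEMPLATES = {
    'new-repo': {'handler': handle_new_repo, 'branch_prefix': 'new-repo'},
    'new-user': {'handler': handle_new_user, 'branch_prefix': 'new-user'},
    # add more templates here
}

def detect_template(labels: list[str]) -> Optional[str]:
    # Return the first label that has a registered template
    for label in labels:
        if label in TEMPLATES:
            return label
    return None
```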

Adding a new template type means adding one entry to TEMPLATES and implementing its handler function. Nothing else changes.
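The script's entry point is not shown in this article, so here is a sketch of how detection and dispatch might fit together. `process_issue`, the stub handler, and the `skip` key are assumptions for illustration. The one detail taken from GitHub itself is that the issue payload lists labels as objects with a `name` key.

```python
import json
from typing import Optional


def handle_new_repo(issue_body: str, issue_number: int) -> dict:
    # Stub standing in for the real handler shown later in the article.
    return {'commit_msg': f"Add repo from issue #{issue_number}"}


TEMPLATES: dict[str, dict] = {
    'new-repo': {'handler': handle_new_repo, 'branch_prefix': 'new-repo'},
}


def detect_template(labels: list[str]) -> Optional[str]:
    for label in labels:
        if label in TEMPLATES:
            return label
    return None


def process_issue(issue: dict) -> dict:
    """Route an issue payload to the handler registered for its label."""
    # The issue payload lists labels as objects with a 'name' key.
    labels = [label['name'] for label in issue.get('labels', [])]
    template = detect_template(labels)
    if template is None:
        # Hypothetical convention: signal that there is nothing to do.
        return {'skip': True}
    entry = TEMPLATES[template]
    result = entry['handler'](issue.get('body') or '', issue['number'])
    # The branch prefix comes from the registry, not from the handler.
    result['branch_prefix'] = entry['branch_prefix']
    return result


issue = {'number': 42, 'body': '', 'labels': [{'name': 'new-repo'}]}
print(json.dumps(process_issue(issue)))
```

Keeping the branch prefix in the registry means a handler only has to describe its own change, which is what keeps new template types cheap to add.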

Field parsing

GitHub issue forms render as markdown with ### Label headings. The parser extracts values using regex:

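```python
import re
from typing import Optional

def parse_field(issue_body: str, label: str) -> Optional[str]:
    # Match the "### Label" heading and capture the first non-blank line after it
    pattern = rf'###\s*{re.escape(label)}\s*\n\s*(.+)'
    match = re.search(pattern, issue_body, re.IGNORECASE)
    if match:
        value = match.group(1).strip()
        # GitHub writes "_No response_" under optional fields left empty
        return value if value and value != '_No response_' else None
    return None
```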
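To make the parsing concrete, here is a small standalone run against a sample issue body. The body is an assumed example of what the form above would produce. The parser is repeated so the snippet runs on its own.

```python
import re
from typing import Optional


def parse_field(issue_body: str, label: str) -> Optional[str]:
    pattern = rf'###\s*{re.escape(label)}\s*\n\s*(.+)'
    match = re.search(pattern, issue_body, re.IGNORECASE)
    if match:
        value = match.group(1).strip()
        return value if value and value != '_No response_' else None
    return None


# Assumed shape of a submitted form body: one heading per field,
# with "_No response_" under optional fields left empty.
sample_body = (
    "### Name of the new repo\n"
    "\n"
    "my-new-service\n"
    "\n"
    "### Repository Topics\n"
    "\n"
    "_No response_\n"
)

print(parse_field(sample_body, 'Name of the new repo'))  # my-new-service
print(parse_field(sample_body, 'Repository Topics'))     # None
print(parse_field(sample_body, 'Missing field'))         # None
```

Note that the pattern captures only the first line of a value, which suits single-line inputs like these. A multi-line textarea field would need a different pattern.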

The repo handler

The handler parses the issue, then writes structured data into a JSON config file that Terraform consumes:

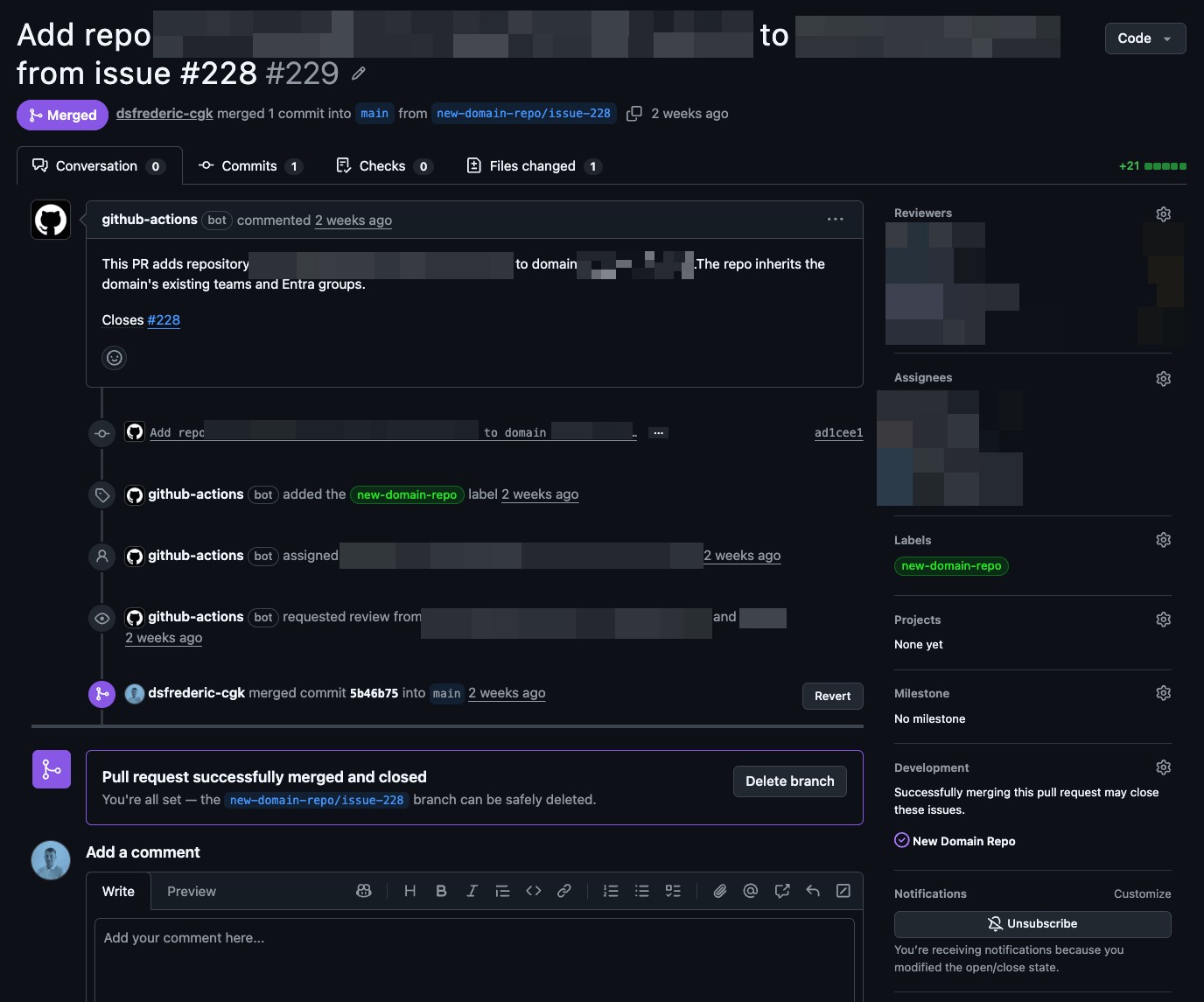

```python
def handle_new_repo(issue_body: str, issue_number: int) -> dict:
    repo_name = parse_field(issue_body, 'Name of the new repo')
    topics = parse_list(issue_body, 'Repository Topics')

    if not repo_name:
        raise ValueError("Repository name is required")

    data = load_json('infra/github_mgmt/input.json')

    # setdefault creates the 'repos' map if the file does not have one yet
    repos = data.setdefault('repos', {})
    if repo_name in repos:
        raise ValueError(f"Repository '{repo_name}' already exists")

    repos[repo_name] = {
        'repo_name': repo_name,
        'visibility': 'private',
        'topics': topics,
    }

    save_json('infra/github_mgmt/input.json', data)

    # Key names match what the workflow reads with jq
    return {
        'files_to_add': 'infra/github_mgmt/input.json',
        'commit_msg': f"Add repo {repo_name}",
        'pr_title': f"Add repo {repo_name} from issue #{issue_number}",
        'pr_body': f"Adds repository **{repo_name}**. Closes #{issue_number}"
    }
```

The handler updates one JSON file and returns metadata: the file to stage, the commit message, and the PR title and body. The branch prefix comes from the handler's TEMPLATES entry. The handler owns no git logic.
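The handler relies on three helpers that the article does not show: `parse_list`, `load_json`, and `save_json`. The following is a minimal sketch of what they might look like. The bodies are assumptions, including the choice to sort keys on write.

```python
import json
import re
from pathlib import Path
from typing import Optional


def parse_field(issue_body: str, label: str) -> Optional[str]:
    # Same parser as shown earlier, repeated so this sketch runs standalone.
    pattern = rf'###\s*{re.escape(label)}\s*\n\s*(.+)'
    match = re.search(pattern, issue_body, re.IGNORECASE)
    if match:
        value = match.group(1).strip()
        return value if value and value != '_No response_' else None
    return None


def parse_list(issue_body: str, label: str) -> list[str]:
    """Split a comma-separated field into trimmed, non-empty items."""
    raw = parse_field(issue_body, label)
    if raw is None:
        return []
    return [item.strip() for item in raw.split(',') if item.strip()]


def load_json(path: str) -> dict:
    """Read a JSON config file into a dictionary."""
    return json.loads(Path(path).read_text(encoding='utf-8'))


def save_json(path: str, data: dict) -> None:
    """Write the config back with stable formatting to keep diffs small."""
    text = json.dumps(data, indent=2, sort_keys=True) + '\n'
    Path(path).write_text(text, encoding='utf-8')


body = "### Repository Topics\n\npython, api, data-processing\n"
print(parse_list(body, 'Repository Topics'))
```

Returning an empty list for a missing field lets the handler write `topics` unconditionally, and deterministic formatting keeps the pull request diff limited to the entry that was added.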

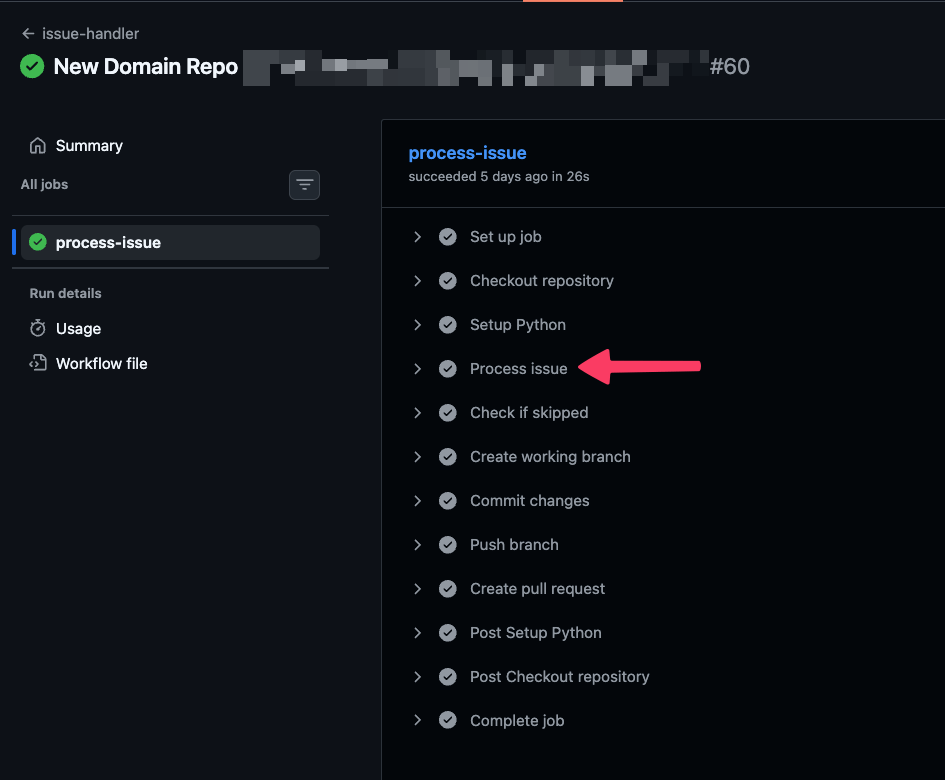

Layer 3: The GitHub Actions workflow

The workflow ties everything together. It triggers on issue events, runs the Python handler, and creates a PR from the result:

```yaml
name: issue-handler
on:
  issues:
    types: [opened, reopened, edited]

permissions:
  contents: write
  pull-requests: write
  issues: read

jobs:
  process-issue:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.11"

      - name: Process issue
        id: process
        env:
          # Pass the issue through the environment instead of inlining it,
          # so quotes in the issue body cannot break the shell script
          ISSUE_JSON: ${{ toJson(github.event.issue) }}
        run: |
          # Assumes the script prints its result as single-line JSON
          result=$(python .github/scripts/issue_handler.py "$ISSUE_JSON")
          echo "result=$result" >> "$GITHUB_OUTPUT"
          echo "branch_prefix=$(echo "$result" | jq -r '.branch_prefix')" >> "$GITHUB_ENV"
          echo "files_to_add=$(echo "$result" | jq -r '.files_to_add')" >> "$GITHUB_ENV"
          echo "commit_msg=$(echo "$result" | jq -r '.commit_msg')" >> "$GITHUB_ENV"
          echo "pr_title=$(echo "$result" | jq -r '.pr_title')" >> "$GITHUB_ENV"

      - name: Create branch, commit, and open PR
        if: env.skip != 'true'
        env:
          GITHUB_TOKEN: ${{ github.token }}
          RESULT_JSON: ${{ steps.process.outputs.result }}
        run: |
          # The runner has no git identity by default, so commits need one
          git config user.name "github-actions[bot]"
          git config user.email "41898282+github-actions[bot]@users.noreply.github.com"
          git checkout -b "$branch_prefix/issue-${{ github.event.issue.number }}"
          git add "$files_to_add"
          git commit -m "$commit_msg"
          git push --set-upstream origin "$branch_prefix/issue-${{ github.event.issue.number }}"
          gh pr create --title "$pr_title" --body-file <(echo "$RESULT_JSON" | jq -r '.pr_body')
```

The workflow handles the git plumbing: branch naming, commit messages, PR creation with labels and assignees. The Python script stays focused on business logic.

Layer 4: Terraform, from JSON to infrastructure

The input.json file modified by the Python handler is the single source of truth for Terraform. The root module loads it and iterates over each entry:

```hcl
locals {
  config = jsondecode(file("${path.module}/input.json"))
  repos  = local.config.repos
}

module "github_repo" {
  source   = "./modules/github_repo"
  for_each = local.repos

  repo_name  = each.value.repo_name
  visibility = each.value.visibility
  topics     = each.value.topics
}
```

The repo module creates the actual GitHub resource:

```hcl
resource "github_repository" "repo" {
  name                   = var.repo_name
  visibility             = var.visibility
  topics                 = var.topics
  auto_init              = true
  delete_branch_on_merge = true
}
```

When the PR merges and Terraform applies, the repository gets created. In our real setup, the same pattern provisions additional resources: Entra ID security groups, GitHub teams with Entra sync, and project boards, all driven from the same JSON file.
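To show what Terraform actually iterates over, here is a sketch of the config after one request has been merged. The repository name and topics are example values. The structure follows what the handler writes.

```python
import json

# Assumed contents of input.json after one request has been merged.
# Terraform's for_each needs a map, so repos are keyed by name
# rather than stored as a list.
config = {
    'repos': {
        'my-new-service': {
            'repo_name': 'my-new-service',
            'visibility': 'private',
            'topics': ['python', 'api'],
        },
    },
}

# Each key becomes one module instance address in the Terraform state.
for key in config['repos']:
    print(f'module.github_repo["{key}"]')

print(json.dumps(config, indent=2))
```

Because instances are addressed by key, adding a repository adds one instance and leaves the others untouched, whereas renaming a key would be planned as a destroy followed by a create.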

Adding new template types

The system is designed to be extended. Adding a new request type requires:

- A new .yml issue template with a unique label

- A new handler function in the Python script

- A new entry in the TEMPLATES dictionary

No workflow changes. No Terraform changes. The existing modules handle the new resources.
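As an illustration of those steps, here is a sketch of what a second handler and its registry entry could look like. The field label `GitHub username` and the handler body are hypothetical, and the config update is left out. The returned keys follow what the workflow reads.

```python
import re
from typing import Optional


def parse_field(issue_body: str, label: str) -> Optional[str]:
    # Same parser as shown earlier, repeated so this sketch runs standalone.
    pattern = rf'###\s*{re.escape(label)}\s*\n\s*(.+)'
    match = re.search(pattern, issue_body, re.IGNORECASE)
    if match:
        value = match.group(1).strip()
        return value if value and value != '_No response_' else None
    return None


def handle_new_user(issue_body: str, issue_number: int) -> dict:
    # Hypothetical handler: the 'GitHub username' label is an assumption
    # and must match the label used in the new issue template.
    username = parse_field(issue_body, 'GitHub username')
    if not username:
        raise ValueError("Username is required")
    # A real handler would update the JSON config here,
    # the same way handle_new_repo does.
    return {
        'files_to_add': 'infra/github_mgmt/input.json',
        'commit_msg': f"Add user {username}",
        'pr_title': f"Add user {username} from issue #{issue_number}",
        'pr_body': f"Adds user **{username}**. Closes #{issue_number}",
    }


# The only other change: one new registry entry keyed by the template label.
TEMPLATES = {
    'new-user': {'handler': handle_new_user, 'branch_prefix': 'new-user'},
}

print(handle_new_user("### GitHub username\n\noctocat\n", 7)['pr_title'])
```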

Future improvements

Agentic AI as the intake layer. The current system requires users to fill in a structured form. The next step is letting users send a plain-text request, whether through email or a Microsoft Teams message, and having an AI agent parse the intent, fill the form fields, and submit the issue automatically. The form becomes a machine-to-machine interface rather than a human one.

GitHub Copilot Coding Agent for edge cases. Templates work well for predictable requests. For one-off infrastructure changes that do not fit a template, the Copilot coding agent can be pointed at an issue, explore the existing Terraform codebase, and propose changes following established patterns. An AGENTS.md file in the repository provides the agent with conventions and constraints, improving output quality.

Wrapping up

The pattern shown here is not specific to GitHub repositories. The general approach (structured forms driving declarative config files consumed by infrastructure-as-code) works anywhere you have repeatable infrastructure requests.

The key design decisions:

- Single source of truth: one JSON file consumed by Terraform

- Separation of concerns: forms capture intent, Python transforms it, Actions orchestrates, Terraform provisions

- Human-in-the-loop: automation proposes, humans approve

The result is a GitHub organisation that runs like it has a full DevOps team, without one constantly in the loop.